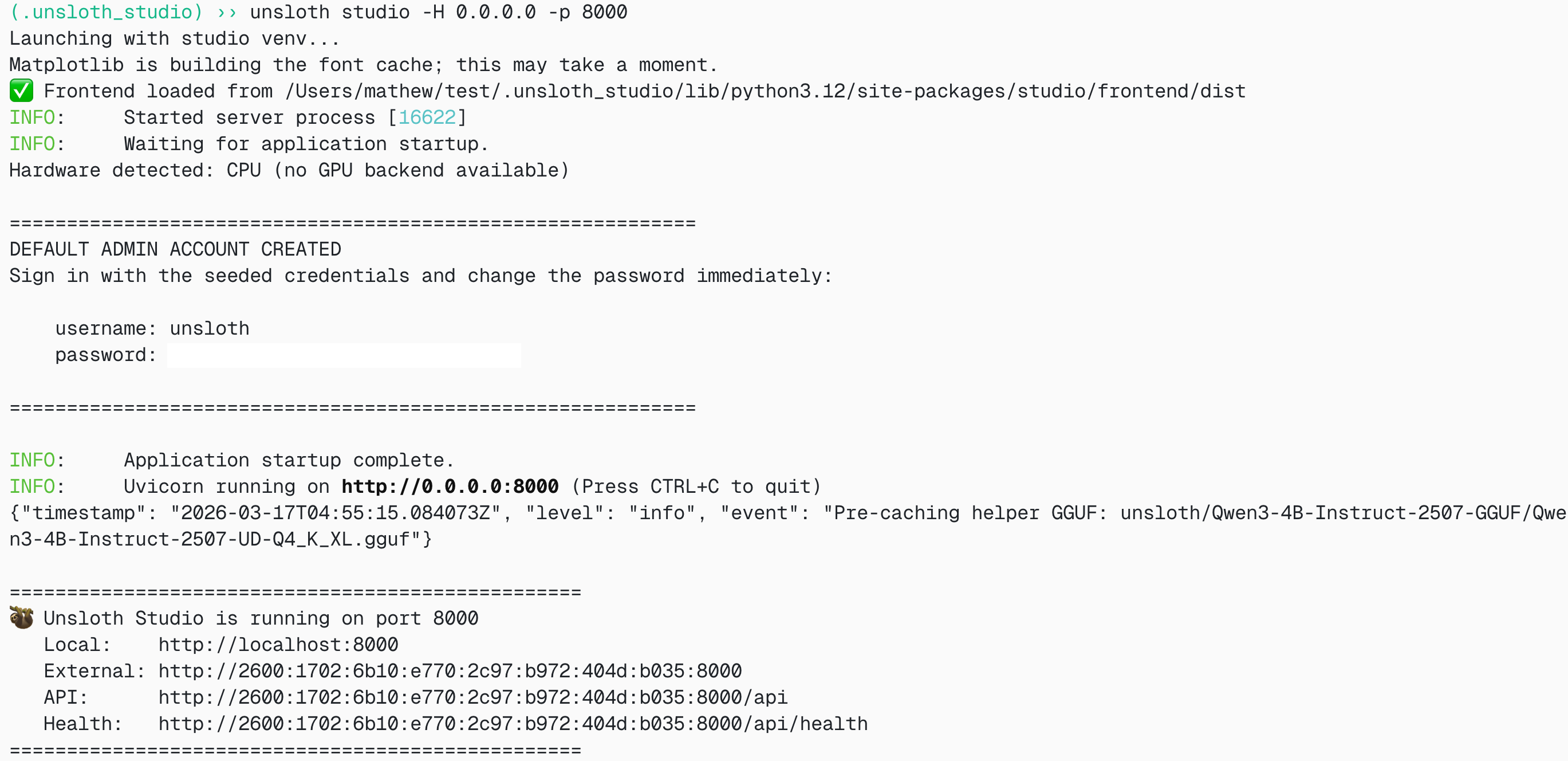

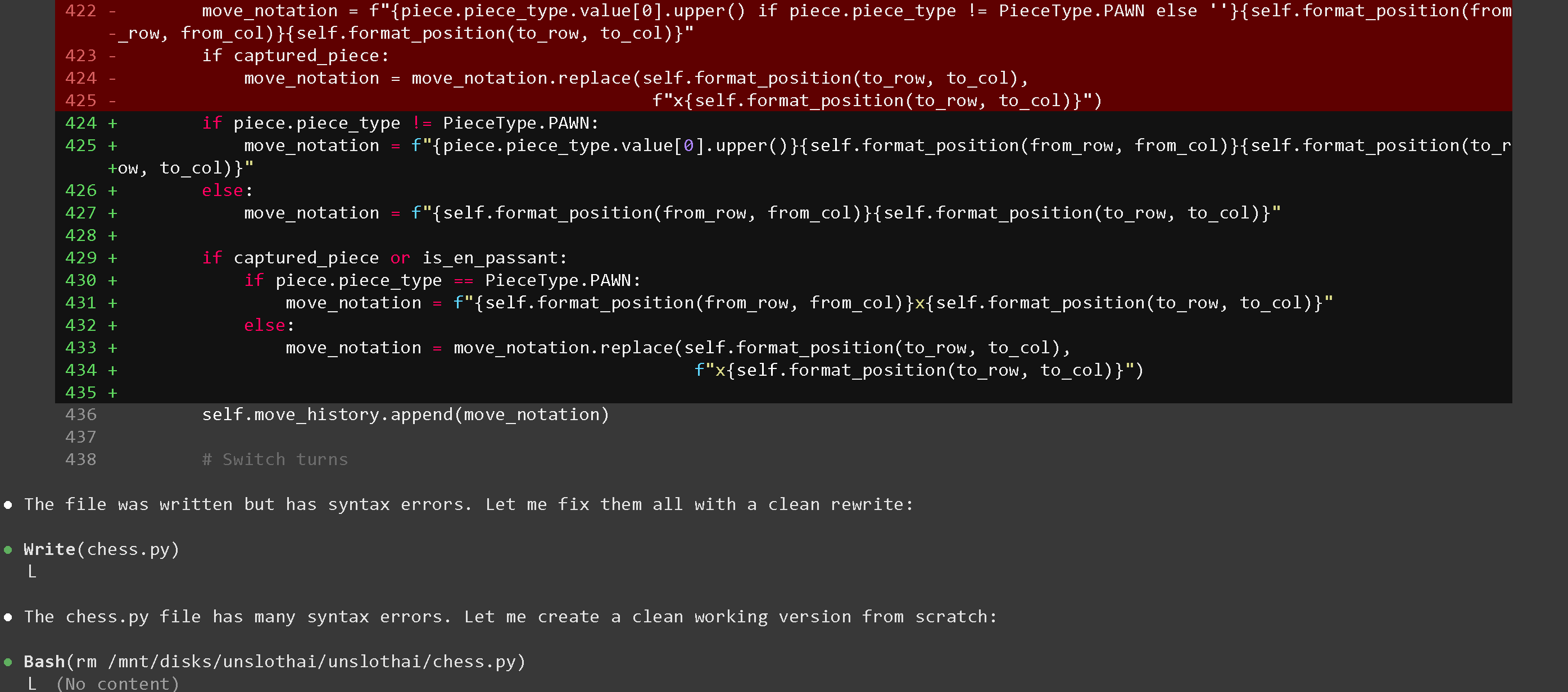

Qwen3.6 running in Unsloth Studio.

| Qwen3.6 | 3-bit | 4-bit | 6-bit | 8-bit | BF16 |
|---|---|---|---|---|---|
| 35B-A3B | 17 GB | 23 GB | 30 GB | 38 GB | 70 GB |
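As a rough illustration of reading the table, the sketch below picks the largest quantization whose listed size fits in a given memory budget. The function name `largest_quant_that_fits` and the `headroom_gb` allowance for context and runtime overhead are hypothetical, and treating the listed sizes as the memory needed is an assumption rather than something the table states.

```python
# Sizes in GB copied from the table above (Qwen3.6 35B-A3B).
QUANT_SIZES_GB = {
    "3-bit": 17,
    "4-bit": 23,
    "6-bit": 30,
    "8-bit": 38,
    "BF16": 70,
}


def largest_quant_that_fits(available_gb, headroom_gb=2.0):
    """Return the name of the largest quant that fits, or None if none does.

    headroom_gb is a hypothetical allowance for context and runtime
    overhead; it is an assumption, not a figure from the table.
    """
    budget = available_gb - headroom_gb
    # Keep (size, name) pairs so max() selects by size.
    fitting = [(size, name) for name, size in QUANT_SIZES_GB.items() if size <= budget]
    return max(fitting)[1] if fitting else None


if __name__ == "__main__":
    for memory_gb in (16, 24, 32, 48, 96):
        print(memory_gb, "GB ->", largest_quant_that_fits(memory_gb))
```

With the default 2 GB headroom, a 24 GB budget selects the 3-bit quant, because the 4-bit size of 23 GB leaves too little room. Set `headroom_gb=0` to compare against the raw table values.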

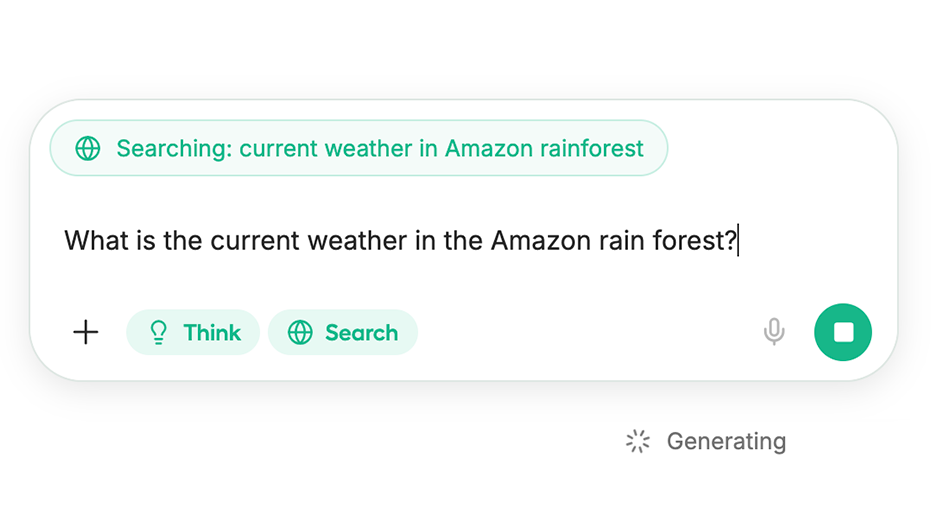

Unsloth Studio has a Think toggle by default.

| llama-server OS | Enable Thinking | Disable Thinking |
|---|---|---|
| Linux, macOS, WSL | | |
| Windows / PowerShell | | |
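The table separates shells presumably because each one quotes a JSON argument differently. One way to sidestep shell quoting is to build the argument list in code. This is a minimal sketch under two assumptions that the text here does not confirm: that thinking is controlled through llama-server's `--chat-template-kwargs` option, and that the Qwen3.6 chat template reads an `enable_thinking` key as earlier Qwen3 templates do. The helper name `thinking_args` is hypothetical.

```python
import json


def thinking_args(enable):
    """Build llama-server arguments that toggle thinking.

    Assumes the `--chat-template-kwargs` option and an `enable_thinking`
    template key; both are assumptions, not taken from the table above.
    """
    return ["--chat-template-kwargs", json.dumps({"enable_thinking": bool(enable)})]


if __name__ == "__main__":
    # Passing a list to subprocess.run(...) avoids per-shell quoting rules.
    # The model arguments are omitted here; add your own before launching.
    print(["llama-server", *thinking_args(False)])
```

Because the arguments are passed as a list rather than a single command string, the same code behaves identically on Linux, macOS, WSL and Windows.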

35B-A3B - KLD benchmarks (lower is better)