{% endhint %}

{% hint style="success" %}

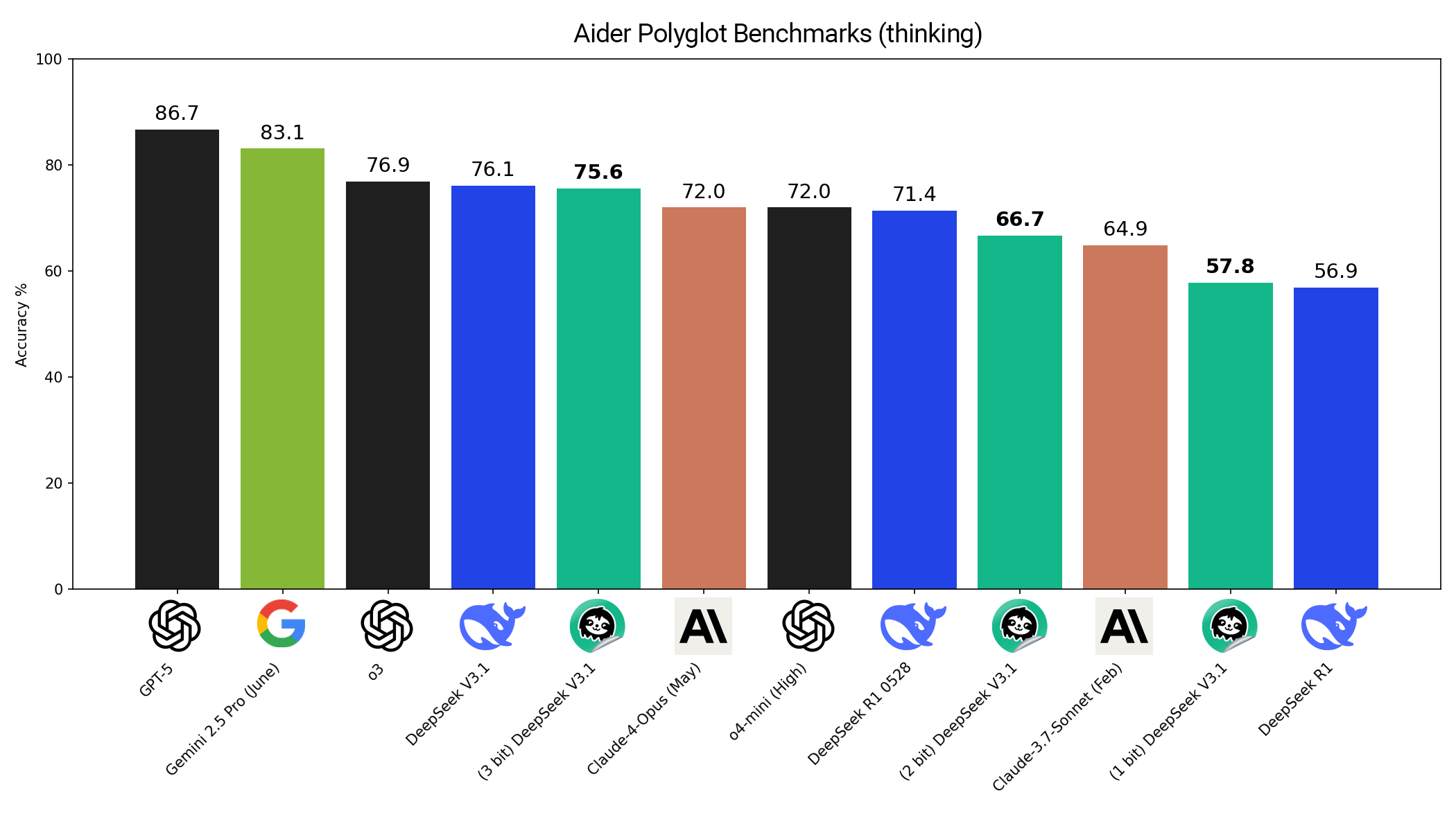

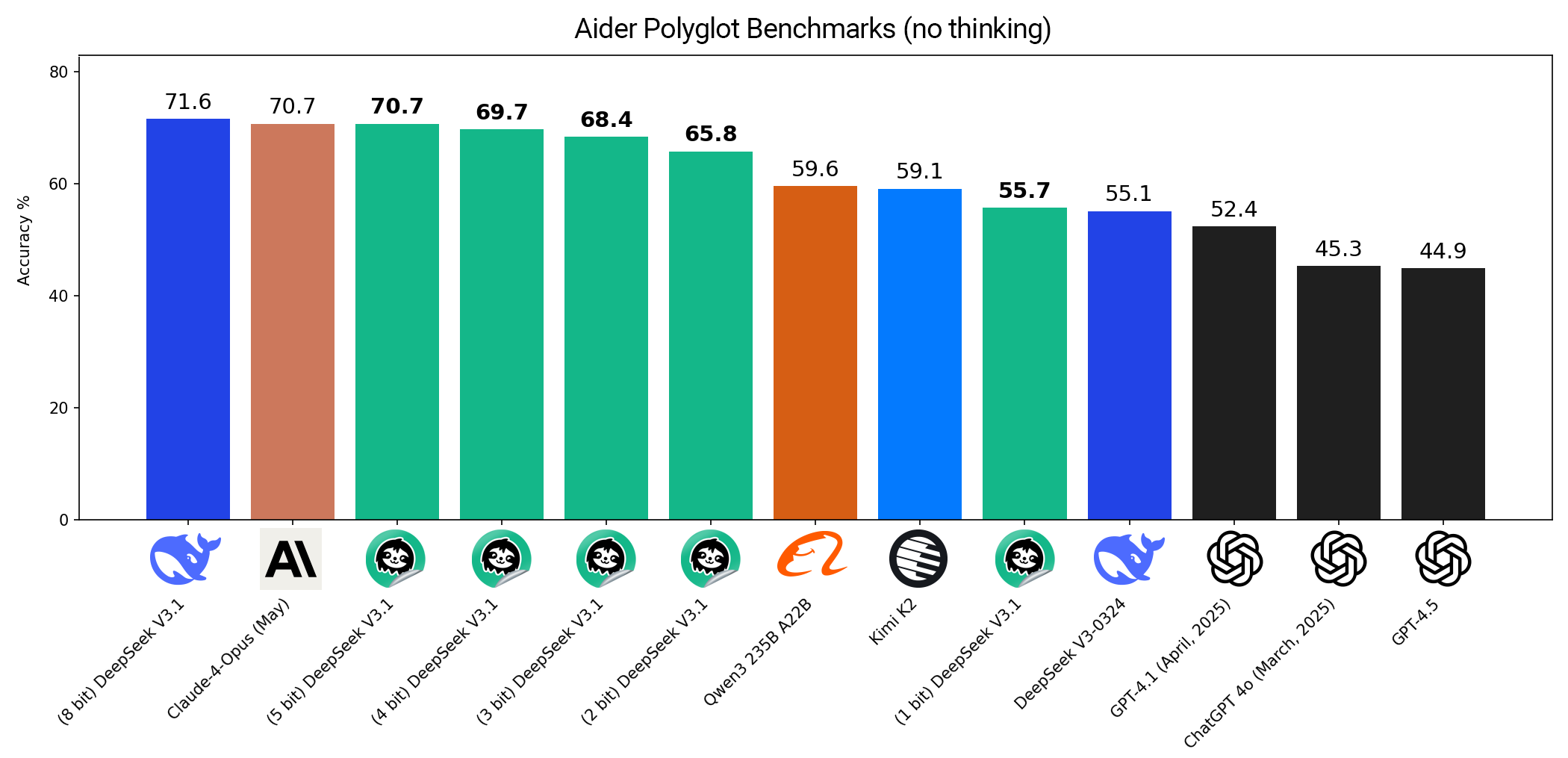

[Sept 10, 2025 update:](https://unsloth.ai/docs/basics/unsloth-dynamic-2.0-ggufs/unsloth-dynamic-ggufs-on-aider-polyglot) You asked for tougher benchmarks, so here are our Aider Polyglot results! Our Dynamic 3-bit DeepSeek V3.1 GGUF scores **75.6%**, surpassing many full-precision SOTA LLMs. [Read more.](https://unsloth.ai/docs/basics/unsloth-dynamic-2.0-ggufs/unsloth-dynamic-ggufs-on-aider-polyglot)

{% endhint %}

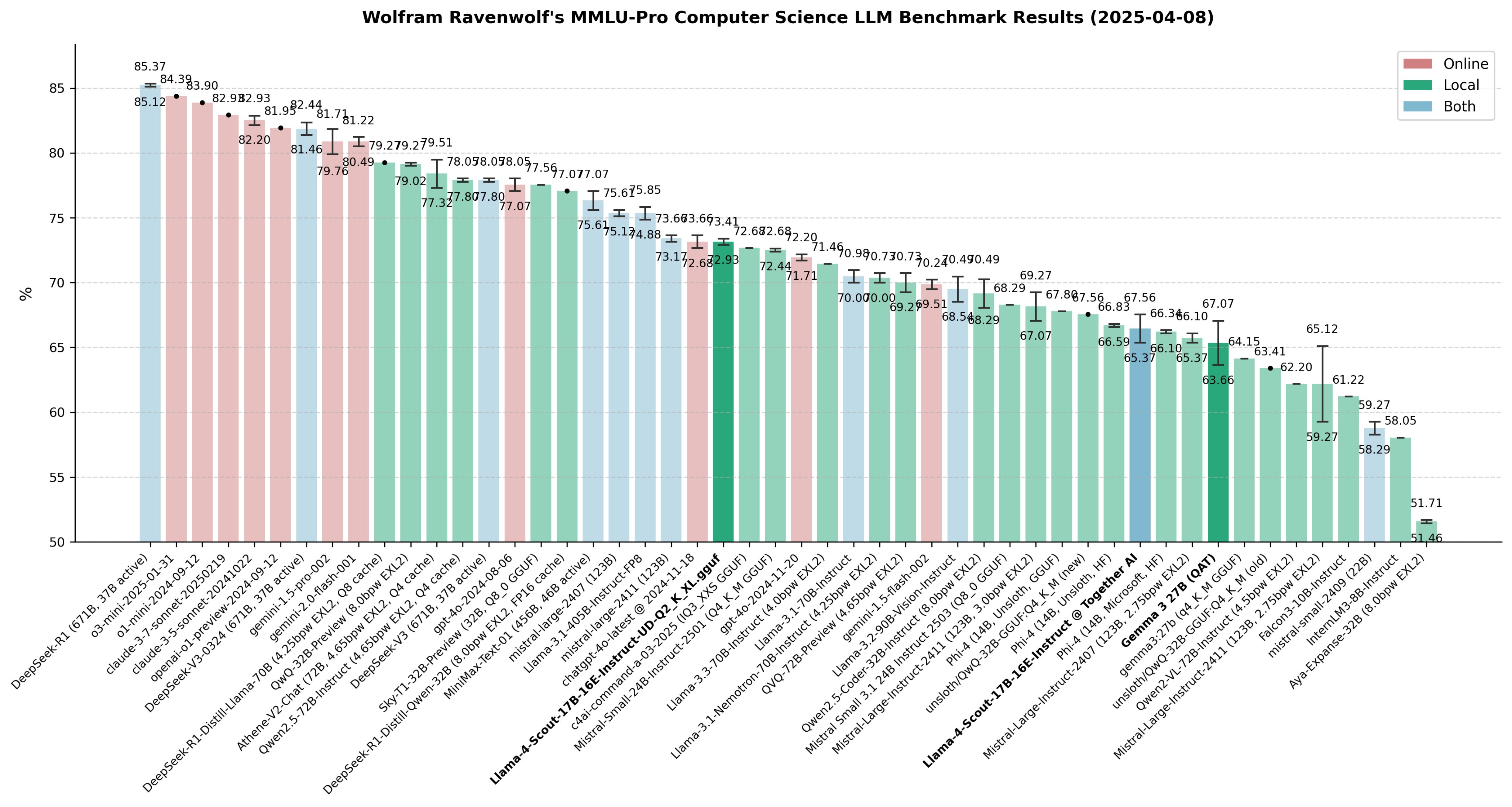

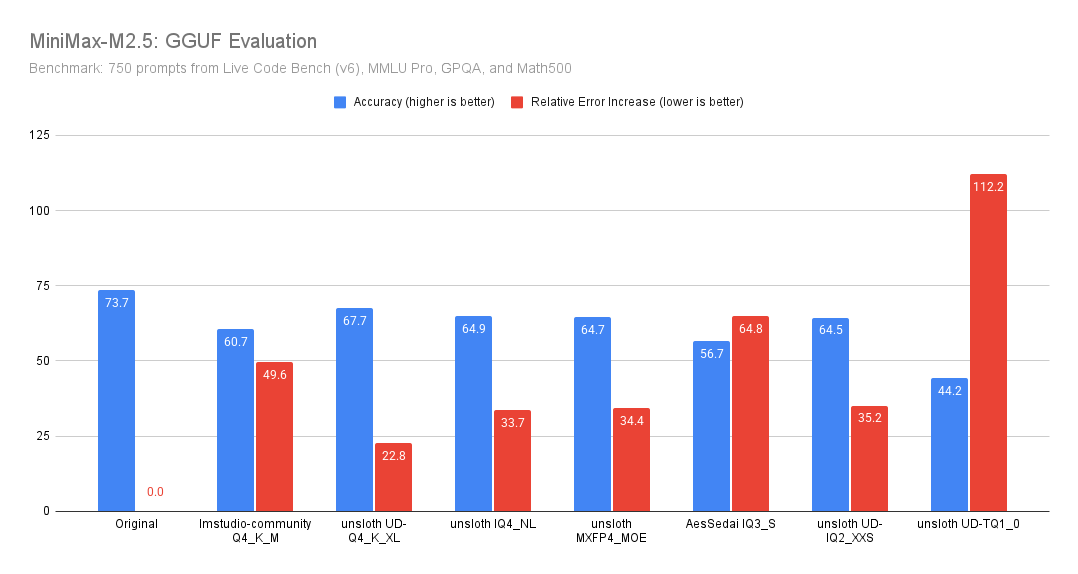

You can also view real-world use-case benchmarks conducted by Benjamin Marie for LiveCodeBench v6, MMLU Pro, and more:

{% endhint %}

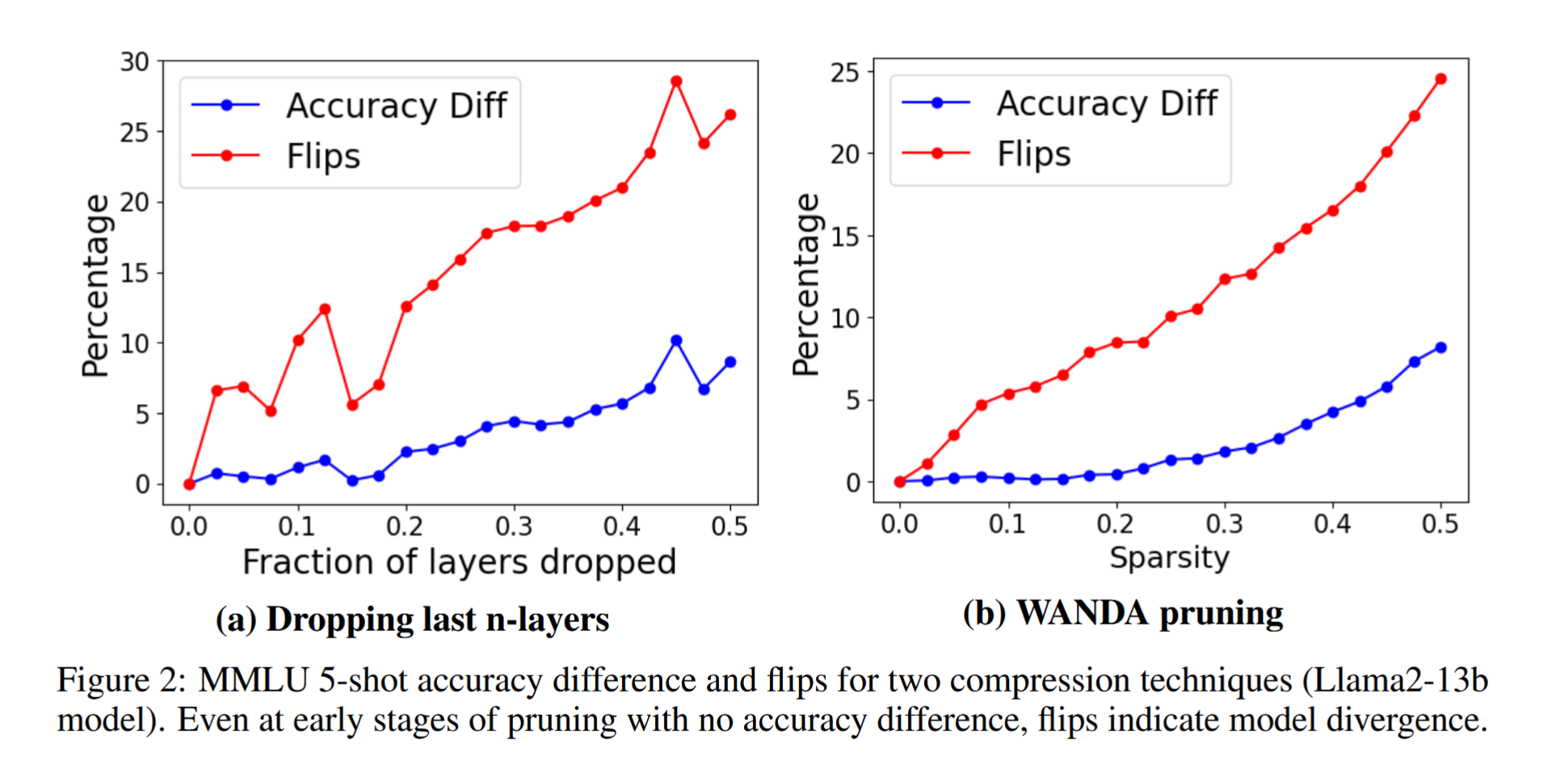

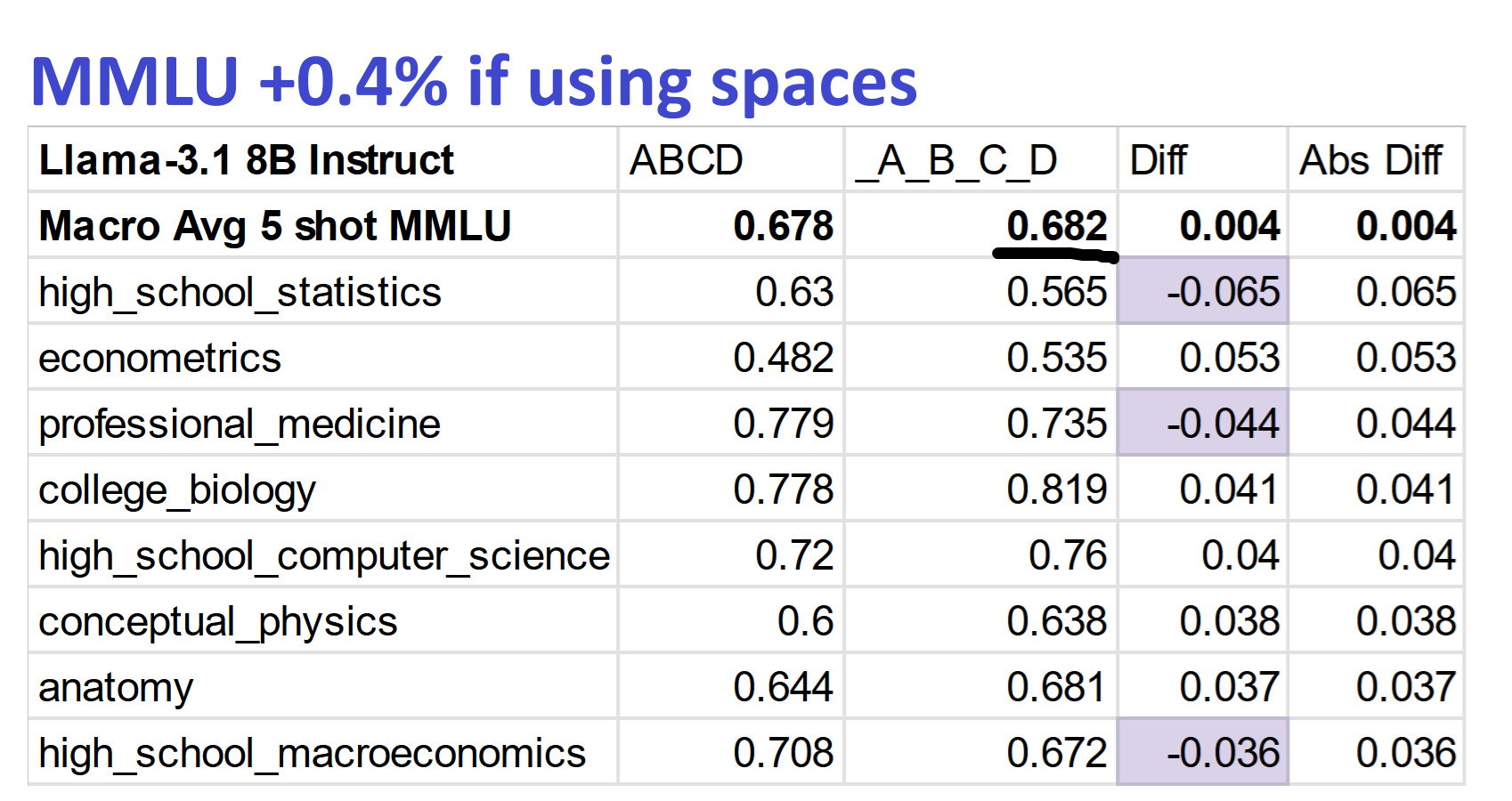

MMLU implementation issues

| Metric | 1B | 4B | 12B | 27B |
|---|---|---|---|---|
| MMLU 5-shot | 26.12% | 55.13% | 67.07% (67.15% BF16) | 70.64% (71.5% BF16) |
| Disk space | 0.93 GB | 2.94 GB | 7.52 GB | 16.05 GB |
| Efficiency* | 1.20 | 10.26 | 5.59 | 2.84 |
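The footnote behind the asterisked Efficiency row is not part of this excerpt. The reported values are, however, consistent with one plausible reading, which we treat here purely as an **assumption**: efficiency = (MMLU 5-shot % − 25) / disk space in GB. Under this reading, 25% is the score a model would get by guessing randomly among MMLU's four answer options. The sketch below recomputes the row from the table's own numbers. Three sizes match exactly; 4B comes out at 10.25 rather than 10.26, a gap that may come from the rounded disk-size figure.

```python
# Sketch: recompute the "Efficiency*" row from the table's MMLU and disk-size rows.
# ASSUMPTION: efficiency = (MMLU 5-shot % - 25) / disk space in GB. The
# official definition is in a footnote not shown in this excerpt.

RANDOM_BASELINE = 25.0  # % accuracy from random guessing among 4 options (A-D)

# size -> (MMLU 5-shot %, disk space in GB, reported efficiency), copied from the table
rows = {
    "1B": (26.12, 0.93, 1.20),
    "4B": (55.13, 2.94, 10.26),
    "12B": (67.07, 7.52, 5.59),
    "27B": (70.64, 16.05, 2.84),
}


def efficiency(mmlu_pct: float, disk_gb: float) -> float:
    """Accuracy points above random guessing, per GB of disk space."""
    return (mmlu_pct - RANDOM_BASELINE) / disk_gb


results = {}
for size, (mmlu, disk, reported) in rows.items():
    computed = efficiency(mmlu, disk)
    results[size] = computed
    print(f"{size}: computed {computed:.2f}, reported {reported:.2f}")
```

Subtracting the 25% baseline means a model that only guesses scores an efficiency of about zero, however small it is on disk.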